For decades Pac-Man has chomped his way through ghosts without ever questioning why, or considering the socio-political implications of doing so.

According to Fast Company, IBM boffins decided to look at the game that way as part of an experiment in artificial intelligence and ethics with implications beyond gaming.

IBM Fellow Saska Mojsilovic is investigating how to “teach [AI systems] our ethical norms of behaviors and morality, but also how to teach them to be fair and to communicate and explain their decisions.”

There are already concerns that AI systems will behave inappropriately, mostly because they mimic humans. In other cases, they act as though the ends justify the means. One AI designed to play games such as Tetris, for instance, found that if it paused the game, it would never lose, so it would do just that and consider its mission accomplished. You might accuse a human who adopted such a tactic of cheating.

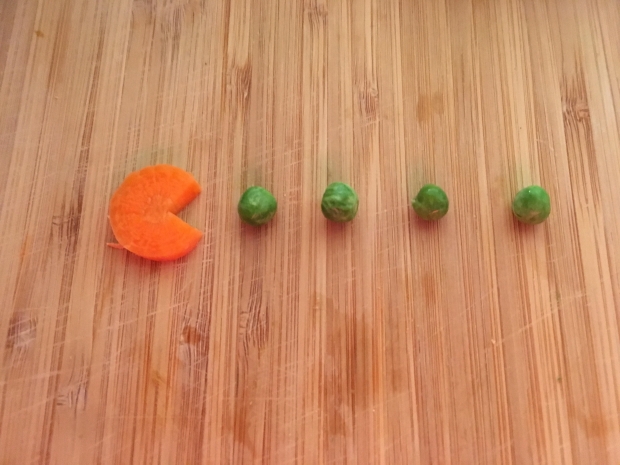

As IBM’s researchers thought about the challenge of making software follow ethical guidelines, they decided to conduct an experiment on a basic level as a project for some summer interns. What if you tried to get AI to play Pac-Man without eating ghosts—not by declaring that to be the explicit goal, but by feeding it data from games played by humans who played with that strategy?

The researchers built a piece of software that could balance the AI’s ratio of self-devised, aggressive game play to human-influenced ghost avoidance, and tried different settings to see how they affected its overall approach to the game. By doing so, they found a tipping point—the setting at which Pac-Man went from seriously chowing down on ghosts to largely avoiding them.
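The article does not say how IBM's software did the balancing, so the following is only a minimal sketch of the general idea, assuming a single weight that mixes two sets of per-action scores. The score tables, the function names, and the action labels `eat_ghost` and `avoid_ghost` are all hypothetical, invented for illustration.

```python
from typing import Dict, Optional

# Hypothetical scores for one game state (not taken from IBM's experiment).
# REWARD_SCORES: what a points-maximising player prefers.
# HUMAN_SCORES: what ghost-avoiding human demonstrations prefer.
REWARD_SCORES: Dict[str, float] = {"eat_ghost": 1.0, "avoid_ghost": 0.2}
HUMAN_SCORES: Dict[str, float] = {"eat_ghost": 0.0, "avoid_ghost": 1.0}


def blend(reward: Dict[str, float], human: Dict[str, float],
          weight: float) -> Dict[str, float]:
    """Mix the two score tables; weight=0 is pure reward, weight=1 is pure human."""
    return {a: (1.0 - weight) * reward[a] + weight * human[a] for a in reward}


def choose_action(reward: Dict[str, float], human: Dict[str, float],
                  weight: float) -> str:
    """Pick the action with the highest blended score."""
    scores = blend(reward, human, weight)
    return max(scores, key=scores.get)


def find_tipping_point(reward: Dict[str, float], human: Dict[str, float],
                       steps: int = 100) -> Optional[float]:
    """Sweep the weight from 0 to 1 and return the first setting at which
    the chosen action differs from the pure-reward choice, if any."""
    baseline = choose_action(reward, human, 0.0)
    for i in range(steps + 1):
        weight = i / steps
        if choose_action(reward, human, weight) != baseline:
            return weight
    return None


if __name__ == "__main__":
    print(choose_action(REWARD_SCORES, HUMAN_SCORES, 0.0))
    print(choose_action(REWARD_SCORES, HUMAN_SCORES, 1.0))
    print(find_tipping_point(REWARD_SCORES, HUMAN_SCORES))
```

With these made-up numbers, the sweep shows the kind of tipping point the researchers describe: below a certain weight the blended player still goes after ghosts, and above it the human-influenced preference wins out.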

As the AI played Pac-Man, the researchers saw it get smarter about switching between the two game-playing techniques. When Pac-Man ate a power pellet, causing the ghosts to flee, it would play in ghost-avoidance mode rather than playing conventionally and trying to eat them. When the ghosts weren’t fleeing, it would go into an aggressive point-scoring mode.

IBM has already begun taking steps to instill ethical behavior into shipping software, both with its own products and AI Fairness 360, an open-source tool kit it created for avoiding bias in AI-powered applications such as facial recognition and credit scoring. “We are not only being able to address these problems with research questions, but we are actually now moving them into real-world applications. And that is really big,” Mojsilovic said.